Predictive credit analytics uses machine learning to assess creditworthiness faster and with more data than traditional methods. It incorporates alternative data like rent and utility payments, making credit accessible to more people. However, ethical challenges like bias, transparency, data privacy, and accountability must be addressed to ensure compliance with laws like the Equal Credit Opportunity Act (ECOA) and upcoming regulations like the EU AI Act (effective August 2026). Key practices include:

- Bias Mitigation: Test models for indirect discrimination (e.g., zip codes acting as proxies for race).

- Transparency: Provide clear, specific reasons for credit decisions, even when AI models are complex.

- Data Privacy: Use techniques like machine unlearning to comply with privacy laws like GDPR and CCPA.

- Accountability: Regular audits, human oversight, and clear governance are critical.



Core Ethical Principles in Predictive Credit Analytics

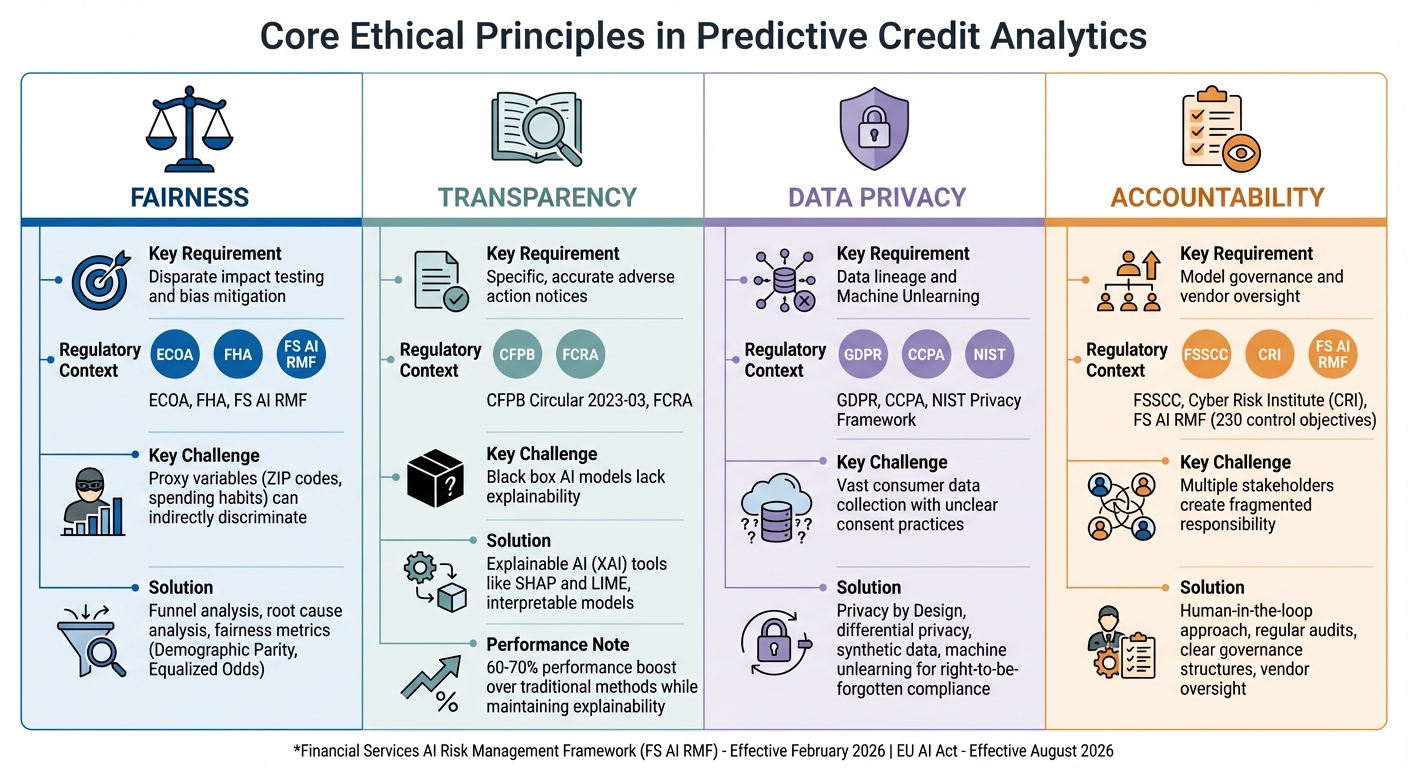

This section highlights the key ethical principles that form the backbone of predictive credit analytics. These principles – fairness, transparency, data privacy, and accountability – are not just theoretical ideals. They are firmly grounded in regulatory frameworks like the Financial Services AI Risk Management Framework, introduced in February 2026. This framework outlines 230 control objectives covering governance, data integrity, and consumer protection. Ignoring these principles can lead to legal repercussions, reputational harm, and the erosion of consumer trust.

Fairness and Equal Treatment

Federal law is clear: predictive credit models cannot discriminate based on protected attributes such as race, gender, age, or religion. The Equal Credit Opportunity Act (ECOA) applies universally to all credit decisions, regardless of the technology behind them. However, achieving fairness goes beyond excluding prohibited variables. Even seemingly neutral factors, like zip codes or spending habits, can indirectly serve as proxies for protected characteristics, leading to disparate impact.

To tackle this, organizations must identify protected groups up front and monitor fairness deliberately, rather than waiting for issues to arise. When disparities are detected, conducting a root cause analysis is crucial. For instance, if marital status influences a credit denial, it’s important to confirm whether it genuinely predicts credit risk or merely acts as a stand-in for a protected trait. Studies show that fairness-focused models can significantly reduce bias, though the accuracy cost depends on which metric is measured. In one example, a less biased credit model saw only a 3.4% drop in rank-ordering accuracy but a 20% decline in calibration accuracy.

Funnel analysis is another valuable method. By examining the AI’s raw outputs, lender thresholds, human interventions, and final decisions, organizations can pinpoint where bias originates – whether it’s in the model itself or in human interactions with it. As Julie Lee from Experian emphasizes:

"The complexity of machine learning models requires rigorous evaluation to ensure fair lending".

Ensuring fairness in model inputs lays a solid foundation for building transparency in credit decision-making.

Transparency and Clear Explanations

Transparency isn’t just a best practice – it’s a legal requirement. Laws like ECOA and the Fair Credit Reporting Act (FCRA) mandate that lenders provide specific and accurate reasons for credit denials, even when decisions are made by complex algorithms. CFPB Director Rohit Chopra reinforced this in 2023:

"Technology marketed as artificial intelligence is expanding the data used for lending decisions, and also growing the list of potential reasons for why credit is denied. Creditors must be able to specifically explain their reasons for denial. There is no special exemption for artificial intelligence".

This means lenders must include detailed, model-specific explanations in adverse action notices. For example, if an AI model denies credit based on spending habits, the notice should specify the particular behaviors involved, rather than broadly citing "purchasing history". Despite the challenges, well-designed machine learning (ML) models can achieve a 60% to 70% performance boost over traditional methods while maintaining explainability.

Organizations have two main approaches to transparency: building interpretable models from the ground up or using post hoc tools like SHAP or LIME to explain "black box" systems. If the latter option is chosen, these tools must be rigorously tested to ensure they accurately reflect the model’s logic. Stratyfy highlights this dual responsibility:

"Transparency is about understanding how a model is working. But what users also require is the ability to change how a model works in order to address these inherent issues with biased data, or biases within a model".

While transparency builds trust, safeguarding consumer data is equally critical.

Data Privacy and Security

Predictive credit models depend on vast amounts of consumer data, making robust privacy measures essential. Organizations must ensure data accuracy and adopt "Privacy by Design" principles, which embed privacy-enhancing technologies like differential privacy and synthetic data directly into their systems.

A growing trend in this area is machine unlearning. This technique allows specific data points to be removed from models to comply with "right-to-be-forgotten" regulations, such as GDPR and CCPA, without requiring a full system retrain. This approach also mitigates disgorgement risk, where regulators could demand the destruction of models built on improperly sourced or discriminatory data.
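The idea of removing a record without a full retrain can be sketched for a deliberately simple case. The example below assumes a one-feature least-squares line whose fit depends only on running sums, so forgetting a record means subtracting its contribution from those sums. The class name `UnlearnableLine` and the data are hypothetical, and real credit models are rarely this simple; they generally need shard-based or approximate unlearning methods instead.

```python
import math
from dataclasses import dataclass
from typing import Tuple


@dataclass
class UnlearnableLine:
    """One-feature least-squares line stored as running sums (hypothetical example)."""
    n: int = 0
    sx: float = 0.0
    sy: float = 0.0
    sxx: float = 0.0
    sxy: float = 0.0

    def learn(self, x: float, y: float) -> None:
        self._update(x, y, sign=1)

    def unlearn(self, x: float, y: float) -> None:
        # Subtract exactly what learn() added for this record.
        self._update(x, y, sign=-1)

    def _update(self, x: float, y: float, sign: int) -> None:
        self.n += sign
        self.sx += sign * x
        self.sy += sign * y
        self.sxx += sign * x * x
        self.sxy += sign * x * y

    def coefficients(self) -> Tuple[float, float]:
        # Closed-form slope and intercept from the sufficient statistics.
        denom = self.n * self.sxx - self.sx ** 2
        slope = (self.n * self.sxy - self.sx * self.sy) / denom
        intercept = (self.sy - slope * self.sx) / self.n
        return slope, intercept


# Made-up (feature, outcome) pairs; the last record is the one to forget.
records = [(1.0, 2.0), (2.0, 4.1), (3.0, 5.9), (4.0, 8.2), (5.0, 30.0)]

model = UnlearnableLine()
for x, y in records:
    model.learn(x, y)
model.unlearn(*records[-1])

# Reference: a model that never saw the forgotten record.
retrained = UnlearnableLine()
for x, y in records[:-1]:
    retrained.learn(x, y)

same_fit = all(
    math.isclose(a, b, rel_tol=1e-9, abs_tol=1e-9)
    for a, b in zip(model.coefficients(), retrained.coefficients())
)
```

Here `same_fit` checks that the unlearned model matches one retrained from scratch without the record, which is the property exact unlearning aims for.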

Accountability and Oversight

Accountability is non-negotiable when it comes to predictive credit analytics. A "human-in-the-loop" approach ensures that disparities in model outcomes are scrutinized to determine whether they stem from legitimate business factors (like high debt) or reflect illegal bias. This responsibility becomes even more critical when third-party AI platforms are involved. Lenders must oversee these systems by reviewing documentation, data usage disclosures, and governance controls.

Vendor artifacts and model documentation should serve as dynamic inputs for continuous monitoring. Regular validation is essential to confirm that the reasons provided for credit denials align with the actual drivers in the AI model, reducing legal risks. Amy S. Mushahwar of Lowenstein Sandler LLP underscores the importance of proactive oversight:

"The FS AI RMF functions as an operational architecture standard, and it forces institutions to confront what we have called the ‘1999 problem’ before supervisory expectations harden".

The "1999 problem" refers to outdated technology infrastructures struggling to keep up with the demands of modern AI decision-making in tightly regulated environments.

| Principle | Key Requirement | Regulatory Context |
|---|---|---|
| Fairness | Disparate impact testing and bias mitigation | ECOA, FHA, FS AI RMF |
| Transparency | Specific, accurate adverse action notices | CFPB Circular 2023-03, FCRA |
| Privacy | Data lineage and "Machine Unlearning" | GDPR, CCPA, NIST Privacy Framework |
| Accountability | Model governance and vendor oversight | FSSCC, Cyber Risk Institute (CRI) |

Ethical Challenges in Predictive Credit Analytics

Building on the ideas of fairness and transparency, this section dives into the real-world challenges that threaten these principles. While predictive analytics in credit decisions offers promise, it also presents ethical dilemmas that can’t be ignored. These aren’t abstract concerns – many have led to lawsuits and settlements. Understanding these issues is key to creating systems that aim to be fairer.

Algorithmic Bias and Discrimination

One of the toughest challenges is dealing with algorithmic bias. This happens when models trained on historical data inherit biases or reflect systemic inequalities. Even when sensitive attributes like race or gender are excluded, algorithms can still infer these traits through proxies – factors like ZIP codes, education levels, shopping patterns, or even surnames can act as stand-ins.

For instance, research found that female applicants were assigned credit scores 6–8 points lower than males in similar situations. Large language models (LLMs) have also shown troubling patterns, such as recommending higher interest rates or denying loans outright to Black applicants compared to white applicants with identical profiles. These aren’t isolated cases. In May 2024, SafeRent Solutions settled a $2.2 million class-action lawsuit for its tenant screening algorithm, which disproportionately penalized Black and Hispanic voucher users by overemphasizing credit history and debt.

Regulators have pursued similar patterns in mortgage lending. In 2024, the DOJ and CFPB secured an $8 million settlement from Fairway Mortgage for redlining in Birmingham, AL. The company was found to market almost exclusively to white neighborhoods, with less than 3% of its ads targeting Black communities. Black borrowers were approved at only 43% of the rate of white borrowers. Similarly, Ameris Bank faced a $9 million penalty in 2023 for avoiding lending in predominantly Black and Latino neighborhoods in Jacksonville, FL.

Bias also creates damaging feedback loops. When loans are denied to certain communities, it stifles local economic growth, which can lower future credit scores and reinforce the cycle. Julie Lee from Experian explains:

"Machine learning models can inadvertently introduce or perpetuate biases, especially when trained on historical data that reflects past prejudices".

To tackle these issues, companies need to conduct disparate impact analyses, comparing outcomes for protected groups against others. Metrics like Demographic Parity (comparable approval rates across groups), Equalized Odds (comparable error rates across groups), and Predictive Parity (comparable precision among approved applicants) can help measure fairness. Regular data audits are also essential to catch and address biased labels before they affect real-world decisions.
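A minimal sketch of how these three metrics might be computed per group, using only the standard library. The function names, the toy data, and the convention that `y_true = 1` means the applicant repaid and `y_pred = 1` means the application was approved are all assumptions made for illustration.

```python
import math
from collections import defaultdict


def _ratio(num, den):
    # Avoid ZeroDivisionError for groups with no cases in a category.
    return num / den if den else float("nan")


def group_fairness_report(y_true, y_pred, groups):
    """Per-group rates. Assumed convention: y_true=1 repaid, y_pred=1 approved."""
    counts = defaultdict(lambda: {"tp": 0, "fp": 0, "tn": 0, "fn": 0})
    for actual, approved, group in zip(y_true, y_pred, groups):
        if approved:
            counts[group]["tp" if actual else "fp"] += 1
        else:
            counts[group]["fn" if actual else "tn"] += 1

    report = {}
    for group, c in counts.items():
        total = sum(c.values())
        report[group] = {
            # Demographic parity compares approval rates.
            "approval_rate": _ratio(c["tp"] + c["fp"], total),
            # Equalized odds compares true and false positive rates.
            "tpr": _ratio(c["tp"], c["tp"] + c["fn"]),
            "fpr": _ratio(c["fp"], c["fp"] + c["tn"]),
            # Predictive parity compares precision among approvals.
            "precision": _ratio(c["tp"], c["tp"] + c["fp"]),
        }
    return report


def adverse_impact_ratio(report, protected, reference):
    """Protected group's approval rate divided by the reference group's."""
    return _ratio(report[protected]["approval_rate"],
                  report[reference]["approval_rate"])


# Toy data: first five applicants are group A, last five are group B.
y_true = [1, 1, 1, 0, 0, 1, 1, 1, 0, 0]
y_pred = [1, 1, 1, 1, 0, 1, 1, 0, 0, 0]
groups = ["A"] * 5 + ["B"] * 5

report = group_fairness_report(y_true, y_pred, groups)
air = adverse_impact_ratio(report, protected="B", reference="A")
```

In this toy data, group B is approved at half the rate of group A and also has a lower true positive rate, so both the demographic parity and equalized odds comparisons would flag it for review.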

But bias isn’t the only hurdle – transparency in how these models work is another major concern.

The Black Box Problem

Many machine learning models operate as "black boxes", making it difficult to understand why certain credit decisions are made. Despite this complexity, federal regulations require transparency. CFPB Director Rohit Chopra made it clear:

"Companies are not absolved of their legal responsibilities when they let a black-box model make lending decisions".

This lack of clarity can erode trust. Borrowers deserve clear and specific reasons for credit denials, not vague responses based on data trends. The CFPB has reinforced that creditors must provide accurate explanations for denials, even if the models themselves are hard to interpret.

To address this, lenders are turning to explainable AI (XAI) methods to make model decisions more understandable. They are also encouraged to analyze decision funnels to identify whether disparities stem from the AI model, business rules, or manual interventions. Generic denial forms that fail to reflect the nuanced reasons behind credit decisions are no longer acceptable.

On top of these issues, the ethical use of consumer data introduces further challenges.

Data Usage and Consent

The way customer data is used raises significant ethical questions. Companies collect massive amounts of data, but unclear practices often prevent consumers from giving fully informed consent.

Privacy concerns are growing. The Financial Services AI Risk Management Framework (FS AI RMF), introduced in February 2026, outlines 230 control objectives focused on governance, data handling, and consumer protection. Regulators now demand accountability at every stage of the data lifecycle – from ingestion to deployment.

A new concept gaining traction is "machine unlearning", which allows specific data to be removed from trained models to comply with privacy laws like GDPR and CCPA. This not only safeguards privacy but also helps avoid drastic regulatory measures like model destruction. None of this reduces what lenders owe applicants when a complex model is involved. As Rohit Chopra emphasized:

"The law gives every applicant the right to a specific explanation if their application for credit was denied, and that right is not diminished simply because a company uses a complex algorithm that it doesn’t understand".

To stay ahead, organizations should embed ethical and privacy controls into their processes from the start, rather than trying to patch them in later. Automated tools for tracking data lineage and version control can ensure that training datasets respect privacy standards from the outset. When working with third-party AI vendors, contracts should include clear provisions for transparency and compliance with adverse action notice requirements.

These steps can help organizations navigate the ethical minefield of predictive credit analytics, but they require consistent effort and accountability.

Best Practices for Ethical Predictive Analytics

Addressing the challenges of bias and opacity in predictive analytics requires well-defined actions and processes. Establishing structured methods can improve audit practices, enhance model transparency, and ensure fairness in feature selection.

Conducting Fairness Audits

Fairness audits should be integrated throughout the machine learning process – not just as a final step. This includes actions like pre-processing data to address imbalances, incorporating fairness constraints during model training, and fine-tuning decision thresholds in post-processing stages.

One effective approach is the Decision Funnel analysis, which helps identify where disparities arise – whether from the algorithm itself, human intervention, or business rules. By isolating these sources, teams can pinpoint and address specific issues.
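A decision funnel of this kind could be sketched as follows. The stage names, the record layout, and the 0.8 flagging threshold are assumptions chosen for illustration, not part of any standard; each record simply notes whether the application was still approved after the model, after business rules, and after human review.

```python
STAGES = ("model_pass", "rules_pass", "final_approved")


def funnel_rates(applications):
    """Share of each group's applicants still approved after every stage."""
    totals, passed = {}, {}
    for app in applications:
        group = app["group"]
        totals[group] = totals.get(group, 0) + 1
        stage_counts = passed.setdefault(group, dict.fromkeys(STAGES, 0))
        for stage in STAGES:
            stage_counts[stage] += int(app[stage])
    return {
        group: {stage: passed[group][stage] / totals[group] for stage in STAGES}
        for group in totals
    }


def stage_ratios(rates, protected, reference):
    """Protected-to-reference approval ratio at each stage (1.0 means parity)."""
    return {s: rates[protected][s] / rates[reference][s] for s in STAGES}


def first_stage_below(ratios, threshold=0.8):
    """First stage whose ratio drops under the (assumed) threshold, or None."""
    return next((s for s in STAGES if ratios[s] < threshold), None)


def make_group(group, n_total, n_model, n_rules, n_final):
    # Build toy records where approvals shrink stage by stage.
    return [
        {"group": group, "model_pass": i < n_model,
         "rules_pass": i < n_rules, "final_approved": i < n_final}
        for i in range(n_total)
    ]


applications = (make_group("reference", 10, 8, 7, 7)
                + make_group("protected", 10, 8, 7, 4))
ratios = stage_ratios(funnel_rates(applications), "protected", "reference")
origin = first_stage_below(ratios)
```

In this made-up data both groups pass the model and the business rules at the same rate, and the gap only opens at the final stage, which would point the investigation toward manual overrides rather than the algorithm.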

Another key strategy involves testing a Less Discriminatory Alternative (LDA). This method evaluates whether an alternative model or feature set can maintain predictive accuracy while reducing adverse impacts on protected groups. For example, research conducted in July 2023 by FinRegLab and the Stanford Graduate School of Business demonstrated that automated debiasing techniques often yield greater fairness improvements with minimal accuracy tradeoffs compared to traditional methods. This shows that improving fairness doesn’t have to come at the cost of performance.
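One way to frame an LDA search is as a constrained choice: among candidate models, pick the one with the best fairness metric whose accuracy stays within a tolerance of the baseline. The sketch below assumes AUC as the accuracy measure and AIR as the fairness measure; the candidate names, scores, and the 0.01 tolerance are hypothetical.

```python
from typing import List, NamedTuple, Optional


class Candidate(NamedTuple):
    name: str
    auc: float  # predictive accuracy (higher is better)
    air: float  # adverse impact ratio (closer to 1.0 is fairer)


def pick_lda(baseline: Candidate,
             candidates: List[Candidate],
             max_auc_loss: float = 0.01) -> Optional[Candidate]:
    """Fairest candidate that beats the baseline AIR within the AUC tolerance."""
    viable = [
        c for c in candidates
        if baseline.auc - c.auc <= max_auc_loss and c.air > baseline.air
    ]
    return max(viable, key=lambda c: c.air, default=None)


# Hypothetical validation results for a baseline and three alternatives.
baseline = Candidate("baseline", auc=0.780, air=0.72)
candidates = [
    Candidate("drop_zip_feature", auc=0.776, air=0.81),
    Candidate("retuned_hyperparams", auc=0.779, air=0.78),
    Candidate("hard_fairness_constraint", auc=0.750, air=0.90),
]

chosen = pick_lda(baseline, candidates)
strict = pick_lda(baseline, candidates, max_auc_loss=0.0)
```

With these numbers the search selects `drop_zip_feature`: the constrained model is fairer but gives up too much AUC, and with a zero tolerance no alternative qualifies at all. The tolerance is a business and compliance judgment, not something the code can decide.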

Implementing Explainable AI (XAI)

Explainability is no longer a "nice-to-have" – it’s essential. As Julie Lee from Experian stated:

"ML-powered models may be more predictive, but regulatory requirements and fair lending goals require lenders to use explainable models".

Explainable AI (XAI) techniques provide clarity on how a model arrives at its decisions, making the process more transparent for internal teams, regulators, and consumers. Explanations can be categorized as global (offering insights into overall model behavior) or local (justifying specific decisions, like why a loan application was denied).

To avoid misleading interpretations, it’s recommended to use multiple post-hoc techniques, such as SHAP and LIME, which provide detailed insights into model behavior. Different audiences require tailored explanations: regulators might need technical logs, while consumers benefit from simple, clear reason codes.
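To make the idea of a local explanation concrete, here is a simplified stand-in rather than SHAP or LIME themselves. It assumes a linear scoring model, where each feature's contribution relative to a baseline applicant is just weight × (value − baseline value), and turns the most negative contributions into consumer-facing reason codes. All weights, feature names, and reason texts are hypothetical.

```python
# Hypothetical linear scorecard: positive weights raise the score.
WEIGHTS = {
    "utilization": -2.0,
    "months_since_delinquency": 0.03,
    "income_k": 0.01,
    "recent_inquiries": -0.3,
}
# Hypothetical baseline applicant used as the point of comparison.
BASELINE = {
    "utilization": 0.30,
    "months_since_delinquency": 48,
    "income_k": 60,
    "recent_inquiries": 1,
}
# Hypothetical wording for adverse action notices.
REASON_TEXT = {
    "utilization": "Balances are high relative to credit limits",
    "months_since_delinquency": "A delinquency was reported recently",
    "income_k": "Income is below the level typical of approved applicants",
    "recent_inquiries": "Several recent credit inquiries",
}


def contributions(applicant):
    """Per-feature score contribution relative to the baseline applicant."""
    return {f: WEIGHTS[f] * (applicant[f] - BASELINE[f]) for f in WEIGHTS}


def top_reasons(applicant, k=2):
    """The k features that pulled the score down the most."""
    negatives = sorted(
        (value, feature)
        for feature, value in contributions(applicant).items()
        if value < 0
    )
    return [feature for _, feature in negatives[:k]]


applicant = {
    "utilization": 0.90,
    "months_since_delinquency": 6,
    "income_k": 55,
    "recent_inquiries": 4,
}
reasons = top_reasons(applicant)
notice_lines = [REASON_TEXT[f] for f in reasons]
```

For non-linear models the contributions would come from a post-hoc tool instead of this closed form, but the last step, mapping the largest negative drivers to specific reason text, stays the same.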

The results can be transformative. For instance, in October 2025, Figure, a lending company, used explainable models powered by over 90 machine learning algorithms to assess creditworthiness and borrower intent. This approach helped them rank among the top three HELOC lenders in the U.S.

Reducing Feature Selection Bias

While explainable AI improves transparency, unbiased feature selection is critical for fairness. Even when sensitive attributes like race or gender are excluded, models can still discriminate through proxy variables – indirect indicators like ZIP codes or employment history. Identifying and removing these proxies is vital.

An analysis by FI Consulting of 14 million Home Mortgage Disclosure Act (HMDA) applications revealed that better feature selection and hyperparameter tuning could raise fairness metrics, such as the Adverse Impact Ratio (AIR), without sacrificing model performance. As Graydon Goss from FI Consulting explained:

"Improved feature selection and hyperparameter tuning best increased model fairness (AIR) with negligible impact on model performance (AUC)".

To further reduce bias, companies should regularly audit datasets to correct demographic imbalances using methods like oversampling or weighting. Implementing fairness metrics like Demographic Parity, Equal Opportunity, and Disparate Impact analysis ensures equitable outcomes across different groups. Additionally, selecting features based on logical business relevance helps maintain model simplicity and prevents overfitting.
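As one illustration of the weighting approach, the sketch below uses a reweighing scheme in which each record gets the weight P(group) × P(label) / P(group, label), so that group membership and outcome become statistically independent in the weighted data. The group names and labels are toy values, and this is only one of several possible weighting methods.

```python
import math
from collections import Counter


def reweighing_weights(groups, labels):
    """Weight each record by P(group) * P(label) / P(group, label)."""
    n = len(labels)
    group_counts = Counter(groups)
    label_counts = Counter(labels)
    joint_counts = Counter(zip(groups, labels))
    return [
        group_counts[g] * label_counts[y] / (n * joint_counts[(g, y)])
        for g, y in zip(groups, labels)
    ]


def weighted_positive_rate(groups, labels, weights, group):
    """Weighted share of favorable labels (1) within one group."""
    rows = [(y, w) for g, y, w in zip(groups, labels, weights) if g == group]
    return sum(w for y, w in rows if y == 1) / sum(w for _, w in rows)


# Toy training set: group A has 4 of 6 favorable labels, group B 1 of 4.
groups = ["A"] * 6 + ["B"] * 4
labels = [1, 1, 1, 1, 0, 0, 1, 0, 0, 0]

weights = reweighing_weights(groups, labels)
rate_a = weighted_positive_rate(groups, labels, weights, "A")
rate_b = weighted_positive_rate(groups, labels, weights, "B")
```

After weighting, both groups show the same favorable-label rate as the dataset overall. The weights would then be passed to a training routine that accepts per-sample weights; whether that actually improves fairness on held-out data still has to be measured.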

Human oversight remains essential. In 2023, iTutorGroup, an online education company, faced legal action from the U.S. Equal Employment Opportunity Commission (EEOC) for using an AI system that excluded applicants based on age. This case highlights the importance of having human reviewers monitor AI decisions and establish clear protocols for intervention when necessary.

Regulatory and Compliance Requirements

Federal and state regulations play a crucial role in shaping ethical practices in predictive credit analytics. These laws are designed to protect consumers and guide how companies develop, implement, and manage their models.

Following Fair Lending Laws

The Equal Credit Opportunity Act (ECOA) is the cornerstone of U.S. regulations for predictive credit analytics. It requires lenders to provide clear and understandable explanations for credit decisions, ensuring applicants have the right to know why a decision was made. This means companies must avoid using algorithms that produce decisions without justification.

Although the General Data Protection Regulation (GDPR) is an EU regulation, it has a global influence, especially on U.S. companies operating internationally. GDPR requires "meaningful disclosure of the underlying logic" behind automated decision-making systems. The Financial Stability Board has also cautioned:

"Lack of interpretability and auditability of AI and ML methods could become a macro-level risk".

For health-related data, HIPAA enforces strict guidelines on its collection and usage. Additionally, the EU AI Act, set to take effect in August 2026, identifies credit scoring as a high-risk activity, requiring companies to maintain technical documentation and conduct thorough risk assessments. U.S. companies should pay close attention to this development, as it may influence domestic regulatory trends.

These regulations not only establish consumer protections but also emphasize the need for robust internal oversight.

Creating Ethical Review Frameworks

Internal oversight mechanisms can provide an additional layer of protection beyond what regulations require. Setting up independent oversight committees is one effective strategy to ensure AI systems do not unintentionally harm vulnerable groups. These committees should bring together diverse expertise, including data scientists, legal professionals, ethicists, and representatives from affected communities.

A strong oversight framework also depends on clear accountability structures. When multiple stakeholders – such as data providers, model developers, and end-users – are involved, it’s crucial to clearly define who is responsible for the outcomes of a model. Without this clarity, accountability can become fragmented, making it harder to address issues when they arise.

Redress mechanisms are another critical element. People impacted by automated credit decisions should have a formal process to challenge those outcomes and request corrections. Before rolling out high-risk systems, companies should perform Fundamental Rights Impact Assessments to identify potential harm to consumer rights and outline mitigation strategies. Keeping a "model evidence pack" that includes architectural diagrams, data sources, and validation results can also help meet regulatory audit standards.

While internal frameworks are essential, professional ethical standards provide additional guidance for responsible AI development.

Professional Standards and Guidelines

In addition to legal requirements, professional organizations offer ethical guidelines for responsible AI development. The European Commission’s "Ethics Guidelines for Trustworthy Artificial Intelligence" lists seven key criteria, including transparency, accountability, and respect for human agency. While these guidelines aren’t legally binding in the U.S., they are widely regarded as industry best practices.

Other organizations, such as the Association for Computing Machinery (ACM) and the Institute of Electrical and Electronics Engineers (IEEE), have developed codes of ethics that can serve as a foundation for internal accountability. These standards are especially helpful in areas where regulations are less explicit, ensuring that ethical considerations remain a priority during the development and deployment of predictive analytics systems.

Using Ethical Predictive Analytics in Trade Credit Underwriting

Trade credit underwriting is all about balancing effective risk management with maintaining strong customer relationships. Ethical predictive analytics takes this process a step further by ensuring that risk assessment aligns with principles of fairness and transparency. By using these tools, businesses can make smarter credit decisions while preserving trust with their trading partners.

Balancing Risk Assessment and Customer Trust

Machine learning has transformed how trade credit decisions are made. These models can uncover patterns in enormous datasets that traditional methods might overlook. For example, by analyzing over 5 million financial statements, these tools can predict a company’s solvency and default risk over the next year. Guillaume Huguet, Data Lab Director at Coface, highlights the value of combining advanced technology with expert insights:

"By combining our world-class expertise with the wealth of our global data assets and the power of cutting-edge technologies (artificial intelligence, data science), our clients benefit from more intelligent solutions that inform their decisions and enable them to manage commercial risks in a more predictive way."

In trade credit underwriting, transparency is especially important because of the unique relationships between businesses and their trading partners. Explainable AI (XAI) plays a key role here, offering clear and specific reasons behind credit decisions. For instance, it can differentiate between deliberate fraud and genuine financial hardship by analyzing indicators like First-Payment Default (FPD). Ethical underwriters don’t just deny credit – they provide actionable advice to help customers improve their creditworthiness, which helps maintain strong business relationships.

Fairness is another critical factor. Metrics like Disparate Impact (DI) and Standardized Mean Difference (SMD) help ensure that models don’t unintentionally discriminate against certain groups. Regular audits of these metrics keep the system fair while still protecting businesses from legitimate credit risks.
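Both metrics are simple to compute. The sketch below assumes one common definition of SMD, the difference in group means divided by the pooled standard deviation, and treats DI as a ratio of approval rates. The scores and decisions are made-up values for two hypothetical groups of applicants.

```python
import math
from statistics import mean, variance


def standardized_mean_difference(protected, reference):
    """Difference in means divided by the pooled standard deviation."""
    pooled_sd = math.sqrt((variance(protected) + variance(reference)) / 2)
    return (mean(protected) - mean(reference)) / pooled_sd


def disparate_impact(protected_approved, reference_approved):
    """Ratio of approval rates; inputs are lists of 0/1 decisions."""
    protected_rate = sum(protected_approved) / len(protected_approved)
    reference_rate = sum(reference_approved) / len(reference_approved)
    return protected_rate / reference_rate


# Toy model scores and approval decisions for two groups.
protected_scores = [0.50, 0.55, 0.60, 0.65, 0.70]
reference_scores = [0.60, 0.65, 0.70, 0.75, 0.80]

smd = standardized_mean_difference(protected_scores, reference_scores)
di = disparate_impact([1, 0, 1, 0, 0], [1, 1, 0, 1, 1])
```

A negative SMD means the protected group scores lower on average, and a DI below 1.0 means it is approved less often. Neither number alone shows that a model is unfair; they indicate where a closer review of the underlying drivers is warranted.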

On top of this, integrating insured receivables into the process further strengthens the underwriting framework, reducing financial risk and enabling more informed credit decisions.

Using Insured Receivables for Growth

Incorporating insured receivables into predictive analytics provides a way to manage risk while supporting growth. Trade credit insurance changes the game by adjusting the loss metrics used in underwriting. For example, when insured receivables are factored in, the Loss Given Default (LGD) component in the Expected Loss formula (EL = PD × LGD × EAD) is reduced. This allows businesses to approve higher credit limits for customers who might otherwise be considered too risky.
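A worked version of the formula, under a simplifying assumption that the insurer reimburses a fixed share of any loss so that the effective LGD becomes LGD × (1 − insured share). The customer figures and the 90% coverage level are hypothetical, and real policies typically add deductibles, limits, and exclusions that this sketch ignores.

```python
import math


def expected_loss(prob_default, lgd, ead):
    """EL = PD x LGD x EAD."""
    return prob_default * lgd * ead


def insured_lgd(lgd, insured_share):
    # Simplifying assumption: the insurer reimburses a fixed share of any loss.
    return lgd * (1.0 - insured_share)


def max_exposure(el_budget, prob_default, lgd):
    """Largest EAD that keeps expected loss within the budget."""
    return el_budget / (prob_default * lgd)


PD, LGD, EAD = 0.05, 0.60, 200_000  # hypothetical customer
INSURED_SHARE = 0.90                # hypothetical policy coverage

el_uninsured = expected_loss(PD, LGD, EAD)
el_insured = expected_loss(PD, insured_lgd(LGD, INSURED_SHARE), EAD)

# Credit limit the same expected-loss budget supports once LGD is reduced.
limit_insured = max_exposure(el_uninsured, PD, insured_lgd(LGD, INSURED_SHARE))
```

With these inputs, expected loss falls from about 6,000 to about 600, and the same loss budget supports an exposure of roughly 2,000,000 instead of 200,000. That is the mechanism by which insurance can justify a higher credit limit.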

The financial benefits are clear. Without trade credit insurance, a $100,000 bad debt would require $1,000,000 in new sales to offset the loss, assuming a 10% profit margin. Insurance acts as a safety net, enabling businesses to approve credit for marginal customers – those on the borderline of risk – without exposing themselves to unacceptable financial losses.
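The arithmetic behind that figure, plus a comparison with an insured case, can be sketched as below. The 90% coverage level is a hypothetical value chosen for illustration and does not come from any specific policy.

```python
import math


def sales_to_offset(loss, profit_margin):
    """New sales needed so that the profit on them equals the loss."""
    return loss / profit_margin


def retained_loss(bad_debt, insured_share):
    # Simplifying assumption: the policy pays a fixed share of the bad debt.
    return bad_debt * (1.0 - insured_share)


BAD_DEBT = 100_000
MARGIN = 0.10
INSURED_SHARE = 0.90  # hypothetical coverage level

uninsured_sales = sales_to_offset(BAD_DEBT, MARGIN)
insured_sales = sales_to_offset(retained_loss(BAD_DEBT, INSURED_SHARE), MARGIN)
```

Under these assumptions the uninsured loss needs about $1,000,000 in new sales to recover, while the retained portion of an insured loss needs about $100,000.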

Educational resources, like those from CreditInsurance.com, help businesses understand how to integrate insured receivables into their predictive analytics systems. When combined with ethical AI models, this approach allows companies to expand their credit offerings responsibly. Real-time customer data and "weak signals" from trade credit platforms can be fed into predictive algorithms via APIs, enabling credit scores to adjust dynamically as market conditions evolve.

This approach benefits everyone involved. Customers gain access to better payment terms and higher credit limits, while businesses safeguard their financial stability and maintain consistent credit limits – even during economic uncertainty. By blending ethical AI with trade credit insurance, businesses can strike a balance between growth and responsible risk management.

CreditInsurance.com Resources for Ethical Practices

CreditInsurance.com offers a range of tools and insights aimed at promoting ethical credit practices and financial security. From educational materials to risk management strategies, the platform equips businesses with resources that uphold ethical standards while fostering financial stability.

Educational Tools for Businesses

To help businesses adopt ethical credit practices, CreditInsurance.com provides a variety of educational resources. These include in-depth customer investigation guides, risk management blogs, and newsletters packed with data-driven insights. Additionally, businesses can access macroeconomic analyses, country risk assessments, and sector-specific reports to stay informed about potential commercial risks across their partnerships.

The platform also emphasizes the importance of Explainable AI in ensuring fairness in lending. As Julie Lee from Experian explains:

"Fair lending is a cornerstone of ethical financial practices, prohibiting discrimination based on race, color, national origin, religion, sex, familial status, age, disability, or public assistance status".

CreditInsurance.com helps businesses evaluate risks ethically by providing methodologies that analyze how variables influence machine learning models. Tools like the AI Fair Lending Funnel Analysis further assist in identifying disparities at key stages of credit decisions. This structured approach ensures businesses can align their practices with ethical standards.

Protection Against Financial Risks

The platform also offers solutions for managing commercial and political risks, such as bankruptcy, default, and regulatory changes. With global business insolvencies expected to rise by 2.8% by 2026, CreditInsurance.com provides coverage in nearly 200 markets to mitigate these challenges. Non-payment risks, which contribute to 1 in 4 business failures, are addressed through integrated credit insurance solutions. By combining insurance with predictive analytics, businesses can strengthen their internal credit checks while safeguarding their financial stability.

This dual focus on protection and insight enables companies to navigate risks effectively while planning for long-term growth.

Supporting Business Growth

CreditInsurance.com doesn’t just protect businesses – it helps them grow. By leveraging insured receivables, companies can secure more favorable financing terms from lenders, making it easier to access competitive funding. This is especially beneficial for businesses looking to expand into new markets or extend flexible payment options to clients.

The platform also offers tools for monitoring the financial health of trading partners. Predictive scoring, backed by a database of over 200 million companies, helps businesses detect warning signs of insolvency early. By combining these insights with ethical AI frameworks that emphasize reliability, accountability, fairness, and transparency (RAFT), companies can pursue ambitious growth strategies without compromising ethical integrity.

Conclusion

Building trust and transparency is at the heart of ethical predictive credit analytics. As Rohit Chopra, Director of the CFPB, emphasized:

"Creditors must be able to specifically explain their reasons for denial. There is no special exemption for artificial intelligence".

This underscores the need for businesses to move away from opaque "black box" models and adopt explainable AI systems. These systems ensure customers, regulators, and stakeholders can clearly understand the decision-making process.

The numbers tell a compelling story – nearly 70% of finance professionals believe AI use should be limited due to a lack of transparency and trust. Ignoring these ethical concerns can result in unqualified approvals, increased default rates, and long-term instability. To address this, businesses can implement regular fairness audits, document the reasoning behind model inputs, and explore Less Discriminatory Alternatives (LDAs) that maintain accuracy while reducing bias. This proactive approach ensures accountability and fosters trust in AI operations.

Creating trust also means establishing strong governance frameworks that focus on reliability, fairness, and transparency. This involves testing models throughout the credit decision process, incorporating human oversight for critical decisions, and setting up automated safeguards to catch errors or inconsistencies at their source.

CreditInsurance.com steps in as a valuable partner in this journey. The platform offers educational tools and risk management solutions to help businesses navigate these challenges. By combining ethical predictive analytics with credit insurance, companies can protect themselves from payment defaults while offering flexible credit terms that encourage growth. CreditInsurance.com’s focus on model explainability, fairness audits, and regulatory compliance ensures businesses can integrate advanced analytics with robust oversight.

Ultimately, businesses that prioritize ethics, transparency, and practical resources position themselves to thrive in a regulated marketplace. By aligning ethical predictive analytics with effective risk management strategies, companies can achieve sustainable growth and resilience.

FAQs

How can I tell if a credit AI is biased?

To spot bias in a credit AI system, focus on its fairness and transparency. One way to measure fairness is by using metrics such as disparate impact or demographic parity, which compare outcomes for different protected groups. Additionally, explainable AI methods can shed light on how the system makes decisions, helping to reveal any hidden biases.

It’s also crucial to keep an eye on the model’s performance over time. Regular monitoring ensures the system doesn’t unintentionally disadvantage specific groups. Finally, adhering to regulatory guidelines for oversight and validation is key to maintaining fairness throughout the system’s lifecycle.

What must an AI denial notice explain under U.S. law?

Under U.S. law, when a credit application is denied by an AI system, the denial notice must clearly outline the primary reasons for the decision. It’s not enough to use vague or generic explanations – specific factors that led to the denial must be identified. This transparency is essential for helping consumers understand why their application was denied and ensures compliance with fair lending laws, such as the Equal Credit Opportunity Act (ECOA). For instance, instead of using a broad term like "insufficient income", the notice should detail the specific data points or criteria that influenced the decision.

How does “machine unlearning” work for privacy?

Machine unlearning is a process designed to remove the influence of specific data from machine learning models. This approach helps safeguard privacy and ensures compliance with regulations like the GDPR’s "Right to be Forgotten." Instead of retraining the model entirely from scratch, machine unlearning adjusts the model to effectively "forget" the targeted data.

Techniques such as exact unlearning and differential privacy are commonly used in this process. Exact unlearning aims to leave the model as if the removed data had never been used for training, while differential privacy limits how much any single record can influence the model in the first place. This is particularly important in sensitive areas like credit scoring and financial services, where privacy concerns and regulatory requirements are paramount.